Preface

DeepSeek has been extremely popular lately. The tutorials I read all ran their demos with Ollama, so in this note I run a demo with Python + Hugging Face instead.

This is my first time running a large model locally, so please go easy on me.

Install dependencies

```shell
pip install torch transformers accelerate uvicorn fastapi python-multipart huggingface_hub
```
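Before downloading anything, it can help to confirm which of these packages actually got installed. The sketch below uses only the standard library's `importlib.metadata` to report the installed version of each distribution from the `pip install` line above, or `None` if it is missing; the helper name `installed_versions` is hypothetical.

```python
from importlib.metadata import PackageNotFoundError, version

# Distribution names taken from the pip install command above
REQUIRED = [
    "torch", "transformers", "accelerate", "uvicorn",
    "fastapi", "python-multipart", "huggingface_hub",
]


def installed_versions(names):
    """Map each distribution name to its installed version, or None if missing."""
    result = {}
    for name in names:
        try:
            result[name] = version(name)
        except PackageNotFoundError:
            result[name] = None
    return result


if __name__ == "__main__":
    for name, ver in installed_versions(REQUIRED).items():
        print(f"{name:20} {ver or 'NOT INSTALLED'}")
```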

Download the model

This downloads the 1.5B model. For the other sizes, see huggingface.co/deepseek-ai…
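As a rough guide when picking a size, the weights alone take about parameter count × bytes per parameter: 2 bytes in float16/bfloat16, 4 in float32. The helper below is a back-of-the-envelope sketch; it uses the nominal size from the model name (the real parameter counts differ somewhat) and ignores activations and the KV cache, which need memory on top of this.

```python
def weight_memory_gib(num_params: float, bytes_per_param: int = 2) -> float:
    """Approximate memory for the model weights alone, in GiB."""
    return num_params * bytes_per_param / 1024**3


if __name__ == "__main__":
    # Nominal sizes from the model names; actual parameter counts differ slightly
    for label, n in [("1.5B", 1.5e9), ("7B", 7e9), ("14B", 14e9)]:
        print(f"{label}: fp16 ~{weight_memory_gib(n):.1f} GiB, "
              f"fp32 ~{weight_memory_gib(n, 4):.1f} GiB")
```

For the nominal 1.5B model this gives roughly 2.8 GiB in half precision, which is why it fits on modest GPUs or runs (slowly) on CPU.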

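```python
from huggingface_hub import snapshot_download

# Model name on the Hugging Face Hub
model_id = "deepseek-ai/DeepSeek-R1-Distill-Qwen-1.5B"

# Local directory to download the model into
local_dir = "./models/DeepSeek-R1-Distill-Qwen-1.5B"

snapshot_download(
    repo_id=model_id,
    local_dir=local_dir,
    revision="main",  # model revision (defaults to main)
    # Recent huggingface_hub versions resume interrupted downloads automatically
    # and write real files (not symlinks) into local_dir, so the deprecated
    # resume_download / local_dir_use_symlinks flags are no longer needed.
)
print(f"Model downloaded to: {local_dir}")
```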

Run the script with Python and the model is downloaded to the local directory.
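To confirm the download finished, one option is to list what landed in `local_dir` and check for the files `from_pretrained` will look for. This sketch assumes the repository ships a `config.json` plus `.safetensors` weights (typical for this repo, but check its file list on the Hub); `summarize_model_dir` is a hypothetical helper.

```python
from pathlib import Path


def summarize_model_dir(path):
    """Return (total size in bytes, whether config.json exists, number of .safetensors files)."""
    root = Path(path)
    files = [p for p in root.rglob("*") if p.is_file()]
    total = sum(p.stat().st_size for p in files)
    has_config = (root / "config.json").is_file()
    n_weights = sum(1 for p in files if p.suffix == ".safetensors")
    return total, has_config, n_weights


if __name__ == "__main__":
    total, has_config, n_weights = summarize_model_dir(
        "./models/DeepSeek-R1-Distill-Qwen-1.5B"
    )
    print(f"{total / 1024**3:.2f} GiB, config.json: {has_config}, "
          f"safetensors files: {n_weights}")
```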

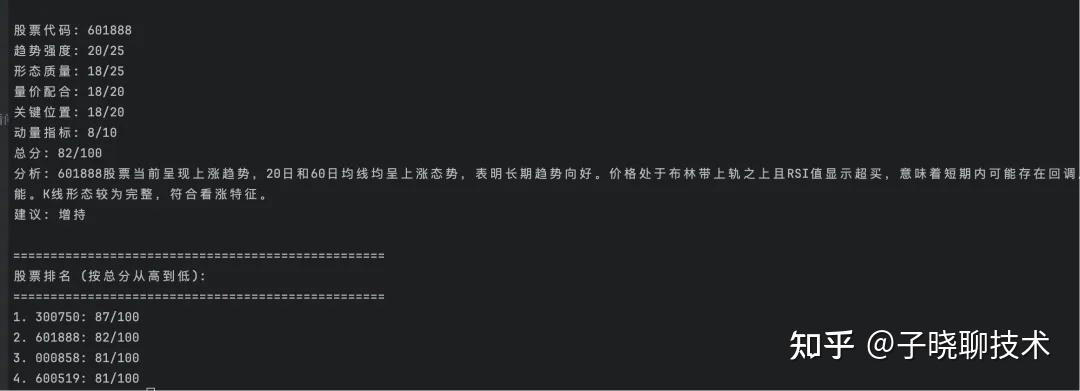

Result

Sometimes the answers drift off topic; adding a few constraints makes them much better.
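The post does not say which constraints were added. One possibility, following the DeepSeek-R1 model card's usage advice (keep all instructions in the user prompt rather than a system prompt, and sample at a temperature of roughly 0.5 to 0.7), is to wrap each question with explicit rules. The rule texts and the `constrained_prompt` helper below are illustrative assumptions, not the author's setup.

```python
# Hypothetical rules; adjust them to your own use case
DEFAULT_RULES = (
    "Answer in English.",
    "Keep the final answer under 200 words.",
    "If you are not sure, say so instead of guessing.",
)


def constrained_prompt(question: str, rules=DEFAULT_RULES) -> str:
    """Prepend numbered rules to the user's question (no system prompt)."""
    lines = [f"{i}. {rule}" for i, rule in enumerate(rules, start=1)]
    return "Follow these rules:\n" + "\n".join(lines) + f"\n\nQuestion: {question}"


if __name__ == "__main__":
    print(constrained_prompt("What is 12 * 13?"))
```

The resulting string would simply be sent as the `prompt` field instead of the raw question.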

Code

The code has two parts, a backend file and a frontend file:

- main.py

- frontend.html
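The two files talk over a single JSON endpoint: the page POSTs `{"prompt": ...}` to `/generate` and reads `generated_text` from the reply. For testing the backend without a browser, a standard-library client could look like the sketch below. It assumes the server from main.py is listening on `http://localhost:8000`; `make_request` and `ask` are hypothetical helpers.

```python
import json
import urllib.request


def make_request(prompt: str, base_url: str = "http://localhost:8000") -> urllib.request.Request:
    """Build the same JSON POST that frontend.html sends to /generate."""
    body = json.dumps({"prompt": prompt}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/generate",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, base_url: str = "http://localhost:8000", timeout: float = 600) -> str:
    """Send the prompt and return the generated_text field of the JSON reply."""
    with urllib.request.urlopen(make_request(prompt, base_url), timeout=timeout) as resp:
        return json.load(resp)["generated_text"]


if __name__ == "__main__":
    print(ask("Hello, who are you?"))
```

The long default timeout is deliberate: on CPU a 2048-token reasoning reply can take minutes.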

Backend: main.py

```python
import torch
from fastapi import FastAPI, Request
from fastapi.responses import HTMLResponse, JSONResponse
from fastapi.staticfiles import StaticFiles
from transformers import AutoModelForCausalLM, AutoTokenizer

app = FastAPI()

# Path to the locally downloaded model
model_name = "./models/DeepSeek-R1-Distill-Qwen-1.5B"

# Load the model and tokenizer
tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    device_map="auto",  # place weights on GPU/CPU automatically
    # Half precision lowers GPU memory use; fall back to float32 on CPU,
    # where float16 inference is slow or unsupported for some ops
    torch_dtype=torch.float16 if torch.cuda.is_available() else torch.float32,
    trust_remote_code=True,  # trust custom model code (if the model needs it)
)

# Serve static files (HTML/CSS/JS) from the current directory
app.mount("/static", StaticFiles(directory="."), name="static")


# Return the frontend page
@app.get("/", response_class=HTMLResponse)
async def index():
    with open("frontend.html", "r", encoding="utf-8") as f:
        return f.read()


@app.post("/generate")
async def generate_text(request: Request):
    data = await request.json()
    prompt = data.get("prompt", "")
    # Cap on newly generated tokens (the max_length sent by the frontend is
    # ignored). Unlike max_length, max_new_tokens does not count the prompt.
    max_new_tokens = 2048

    # Generate text
    inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
    outputs = model.generate(
        **inputs,
        max_new_tokens=max_new_tokens,
        do_sample=True,
        temperature=0.7,
    )
    # Decode only the newly generated tokens so the reply does not echo the prompt
    new_tokens = outputs[0][inputs["input_ids"].shape[-1]:]
    generated_text = tokenizer.decode(new_tokens, skip_special_tokens=True)
    return JSONResponse({"generated_text": generated_text})


if __name__ == "__main__":
    import uvicorn
    uvicorn.run(app, host="0.0.0.0", port=8000)
```
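main.py passes the raw prompt straight to the model. The DeepSeek-R1-Distill models are chat models, so a likely improvement (an assumption, not something tested in this post) is to format the prompt with the tokenizer's chat template first and sample at the model card's recommended temperature of 0.6. The sketch below is written to take main.py's `tokenizer` and `model` objects; the helper names are hypothetical.

```python
def build_messages(prompt: str) -> list:
    """A single user turn; the DeepSeek-R1 model card advises against a system prompt."""
    return [{"role": "user", "content": prompt}]


def strip_prompt(output_ids, prompt_len: int):
    """Keep only the tokens generated after the prompt."""
    return output_ids[prompt_len:]


def chat_generate(tokenizer, model, prompt: str, max_new_tokens: int = 2048) -> str:
    # Render the conversation into the model's own chat format, as plain text
    text = tokenizer.apply_chat_template(
        build_messages(prompt), tokenize=False, add_generation_prompt=True
    )
    # Assumption: the template already inserts the BOS token, so don't add a second one
    inputs = tokenizer(text, return_tensors="pt", add_special_tokens=False).to(model.device)
    outputs = model.generate(
        **inputs, max_new_tokens=max_new_tokens, do_sample=True, temperature=0.6
    )
    new_tokens = strip_prompt(outputs[0], inputs["input_ids"].shape[-1])
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Inside `generate_text`, the tokenize/generate/decode lines could then be replaced by `generated_text = chat_generate(tokenizer, model, prompt)`.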

Frontend: frontend.html

```html
<!DOCTYPE html>
<html lang="en">
<head>
    <meta charset="UTF-8">
    <title>DeepSeek Chat Assistant</title>
    <style>
        body {
            font-family: Arial, sans-serif;
            max-width: 800px;
            margin: 0 auto;
            padding: 20px;
            background-color: #f0f2f5;
        }
        #chat-container {
            background-color: white;
            border-radius: 10px;
            box-shadow: 0 2px 10px rgba(0,0,0,0.1);
            padding: 20px;
        }
        #chat-history {
            height: 800px;
            overflow-y: auto;
            margin-bottom: 20px;
            border: 1px solid #ddd;
            padding: 10px;
            border-radius: 5px;
        }
        .message {
            margin: 10px 0;
            padding: 8px 12px;
            border-radius: 15px;
            max-width: 70%;
        }
        .user-message {
            background-color: #e3f2fd;
            margin-left: auto;
        }
        .bot-message {
            background-color: #f5f5f5;
            margin-right: auto;
        }
        #input-container {
            display: flex;
            gap: 10px;
        }
        #user-input {
            flex: 1;
            padding: 10px;
            border: 1px solid #ddd;
            border-radius: 5px;
        }
        button {
            padding: 10px 20px;
            background-color: #007bff;
            color: white;
            border: none;
            border-radius: 5px;
            cursor: pointer;
        }
        button:hover {
            background-color: #0056b3;
        }
        .think-content {
            color: #666;
            font-style: italic;
            background-color: #f9f9f9;
            padding: 5px;
            border-radius: 5px;
            margin: 5px 0;
        }
        .loading-message {
            color: #666;
            font-style: italic;
            text-align: center;
            padding: 10px;
            animation: blink 1.5s infinite;
        }
        @keyframes blink {
            0% { opacity: 0.6; }
            50% { opacity: 1; }
            100% { opacity: 0.6; }
        }
    </style>
</head>
<body>
    <div id="chat-container">
        <div id="chat-history"></div>
        <div id="input-container">
            <input type="text" id="user-input" placeholder="Type your message...">
            <button onclick="sendMessage()">Send</button>
        </div>
    </div>

    <script>
        // Show the loading indicator
        function showLoading() {
            const chatHistory = document.getElementById('chat-history');
            const loading = document.createElement('div');
            loading.id = 'loading';
            loading.className = 'loading-message';
            loading.textContent = 'Thinking...';
            chatHistory.appendChild(loading);
            scrollToBottom();
        }

        // Hide the loading indicator
        function hideLoading() {
            const loading = document.getElementById('loading');
            if (loading) loading.remove();
        }

        // Message-handling helpers
        function scrollToBottom() {
            const chatHistory = document.getElementById('chat-history');
            chatHistory.scrollTop = chatHistory.scrollHeight;
        }

        // Split a reply at the first </think>: the text before it is the
        // model's reasoning, the text after it is the answer
        function parseResponse(response) {
            const thinkEnd = response.indexOf('</think>');
            if (thinkEnd === -1) {
                // No think tag: return the original content as-is
                return { main: response, think: null };
            }
            const think = response.slice(0, thinkEnd);
            const main = response.slice(thinkEnd + '</think>'.length);
            return { main, think };
        }

        function addMessage(message, isUser = true) {
            const chatHistory = document.getElementById('chat-history');
            const messageDiv = document.createElement('div');
            messageDiv.className = `message ${isUser ? 'user-message' : 'bot-message'}`;

            // Parse the message content (newlines become <br>)
            const { main, think } = parseResponse(message.replace(/\n/g, "<br>"));

            // Show the main content
            messageDiv.innerHTML = main;

            // If there is think content, show it in a lighter style
            if (think) {
                const thinkDiv = document.createElement('div');
                thinkDiv.className = 'think-content';
                thinkDiv.innerHTML = think;
                messageDiv.appendChild(thinkDiv);
            }

            chatHistory.appendChild(messageDiv);
            scrollToBottom();
        }

        async function sendMessage() {
            const input = document.getElementById('user-input');
            const message = input.value.trim();
            if (!message) return;

            input.value = '';
            addMessage(message, true);

            // Show the loading indicator while waiting
            showLoading();

            try {
                const response = await fetch('/generate', {
                    method: 'POST',
                    headers: { 'Content-Type': 'application/json' },
                    body: JSON.stringify({ prompt: message, max_length: 500 })
                });

                if (!response.ok) throw new Error(`HTTP error! status: ${response.status}`);

                const data = await response.json();
                // Hide the loading indicator, then show the reply
                hideLoading();
                addMessage(data.generated_text, false);
            } catch (error) {
                // Hide the loading indicator on errors too
                hideLoading();
                addMessage(`Request failed: ${error.message}`, false);
            }
        }

        // Send on Enter
        document.getElementById('user-input').addEventListener('keypress', (e) => {
            if (e.key === 'Enter') sendMessage();
        });
    </script>
</body>
</html>
```
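The page's `parseResponse` treats everything before the first `</think>` as the model's reasoning and the rest as the answer. If you would rather do that split in main.py and return the two parts as separate JSON fields, a Python equivalent could look like the sketch below. `split_think` and the `think`/`answer` field names are hypothetical, and the frontend would need matching changes; unlike the JavaScript version, it also trims whitespace and a leading `<think>` tag.

```python
def split_think(text: str) -> dict:
    """Split R1-style output into reasoning and answer at the first </think>.

    With no </think> tag, the whole text is the answer and think is None,
    mirroring parseResponse in frontend.html.
    """
    think, sep, answer = text.partition("</think>")
    if not sep:
        return {"think": None, "answer": text}
    # Drop a leading <think> tag if the model emitted one (Python 3.9+ removeprefix)
    think = think.strip().removeprefix("<think>").strip()
    return {"think": think, "answer": answer.strip()}


if __name__ == "__main__":
    print(split_think("<think>\nadd the numbers\n</think>\n\n2 + 2 = 4"))
```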